Unsupervised FAQ Retrieval with Question Generation and BERT

Yosi Mass, Boaz Carmeli, Haggai Roitman, David Konopnicki

Information Retrieval and Text Mining Short Paper

Session 1B: Jul 6

(06:00-07:00 GMT)

Session 3A: Jul 6

(12:00-13:00 GMT)

Abstract:

We focus on the task of Frequently Asked Questions (FAQ) retrieval. A given user query can be matched against the questions and/or the answers in the FAQ. We present a fully unsupervised method that exploits the FAQ pairs to train two BERT models. The two models match user queries to FAQ answers and questions, respectively. We alleviate the missing labeled data of the latter by automatically generating high-quality question paraphrases. We show that our model is on par and even outperforms supervised models on existing datasets.

You can open the

pre-recorded video

in a separate window.

NOTE: The SlidesLive video may display a random order of the authors.

The correct author list is shown at the top of this webpage.

Similar Papers

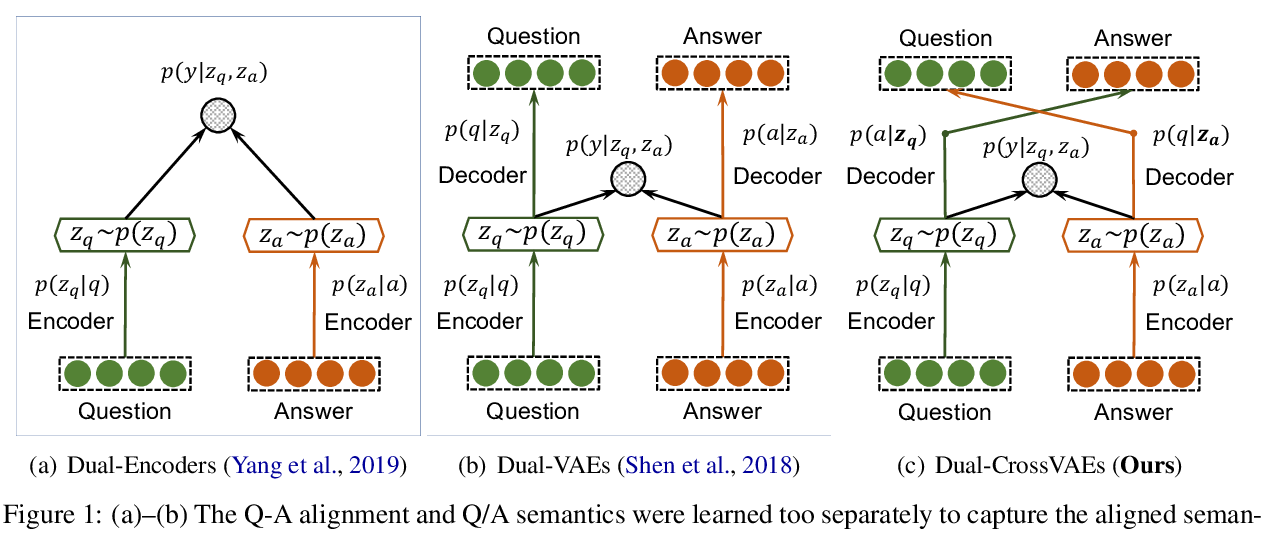

Crossing Variational Autoencoders for Answer Retrieval

Wenhao Yu, Lingfei Wu, Qingkai Zeng, Shu Tao, Yu Deng, Meng Jiang,

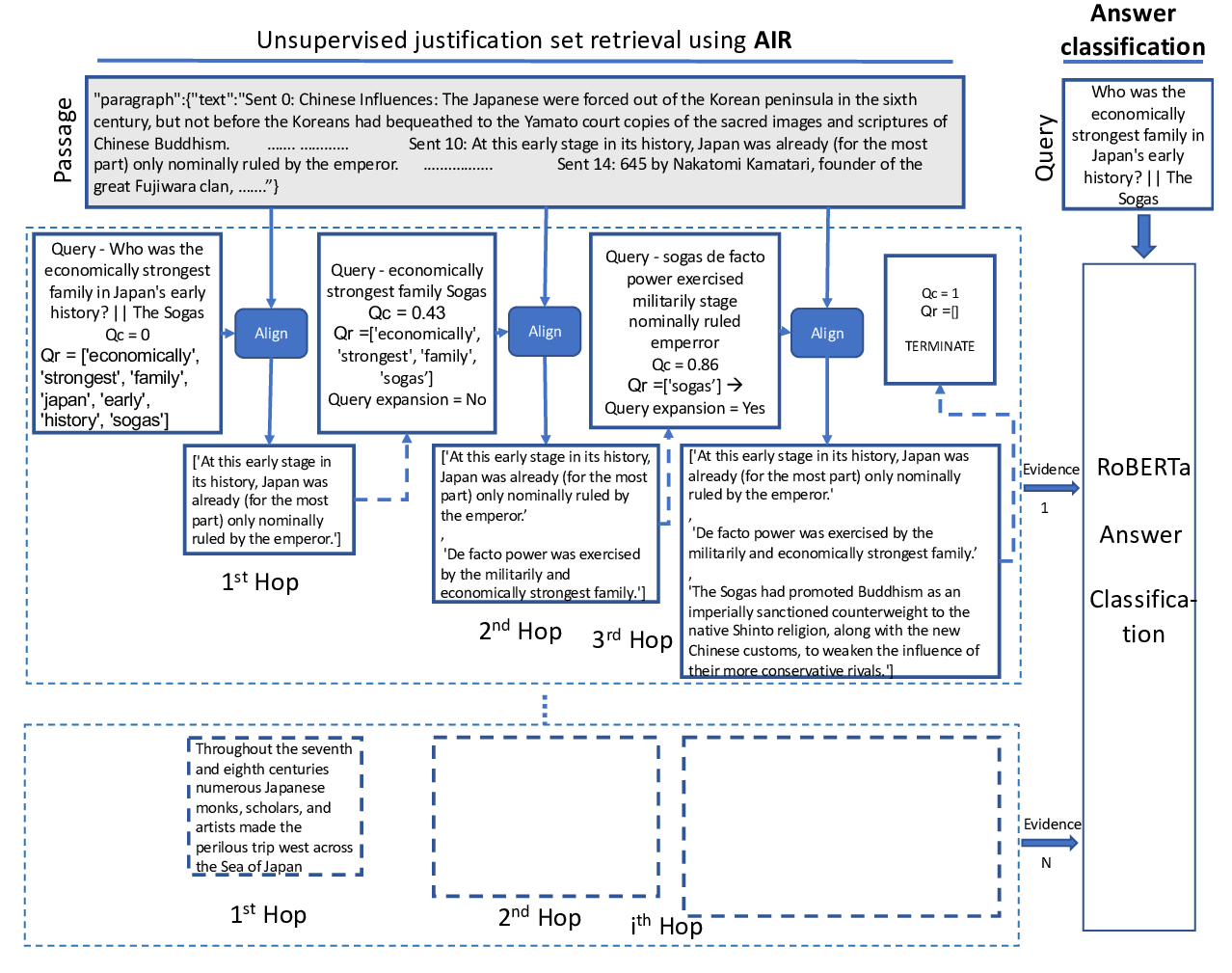

Unsupervised Alignment-based Iterative Evidence Retrieval for Multi-hop Question Answering

Vikas Yadav, Steven Bethard, Mihai Surdeanu,

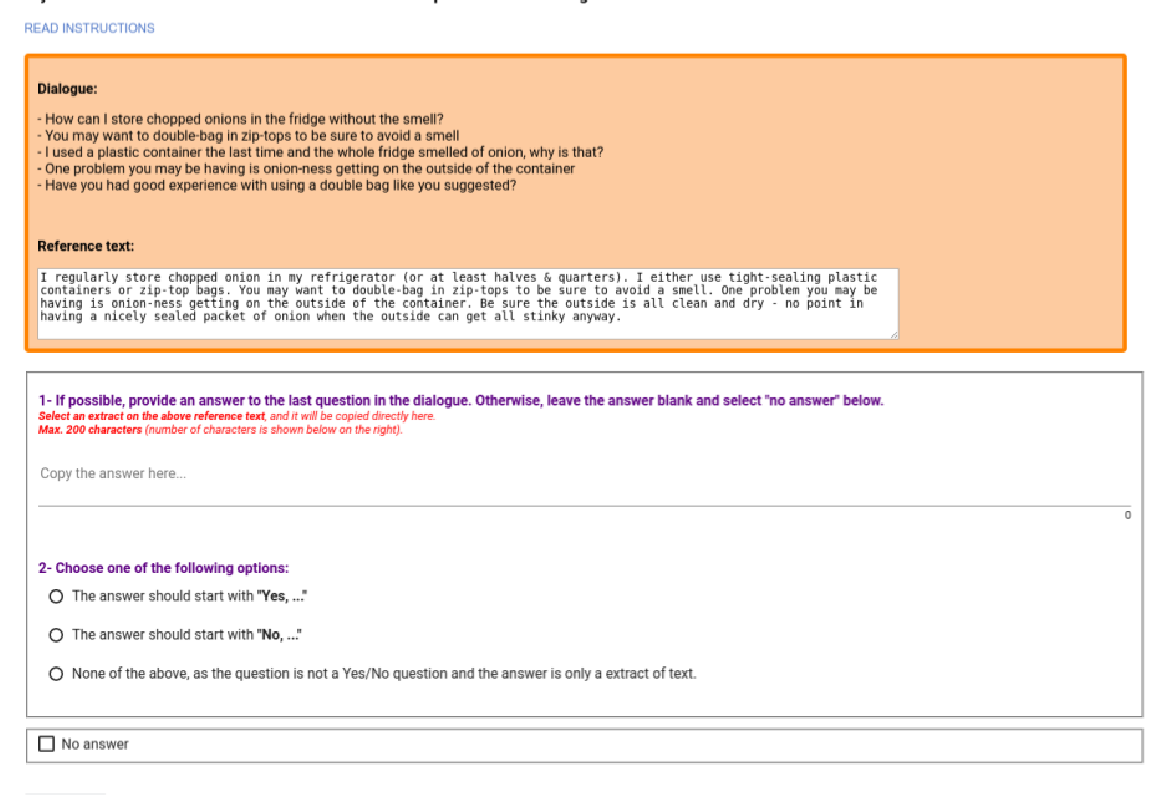

DoQA - Accessing Domain-Specific FAQs via Conversational QA

Jon Ander Campos, Arantxa Otegi, Aitor Soroa, Jan Deriu, Mark Cieliebak, Eneko Agirre,

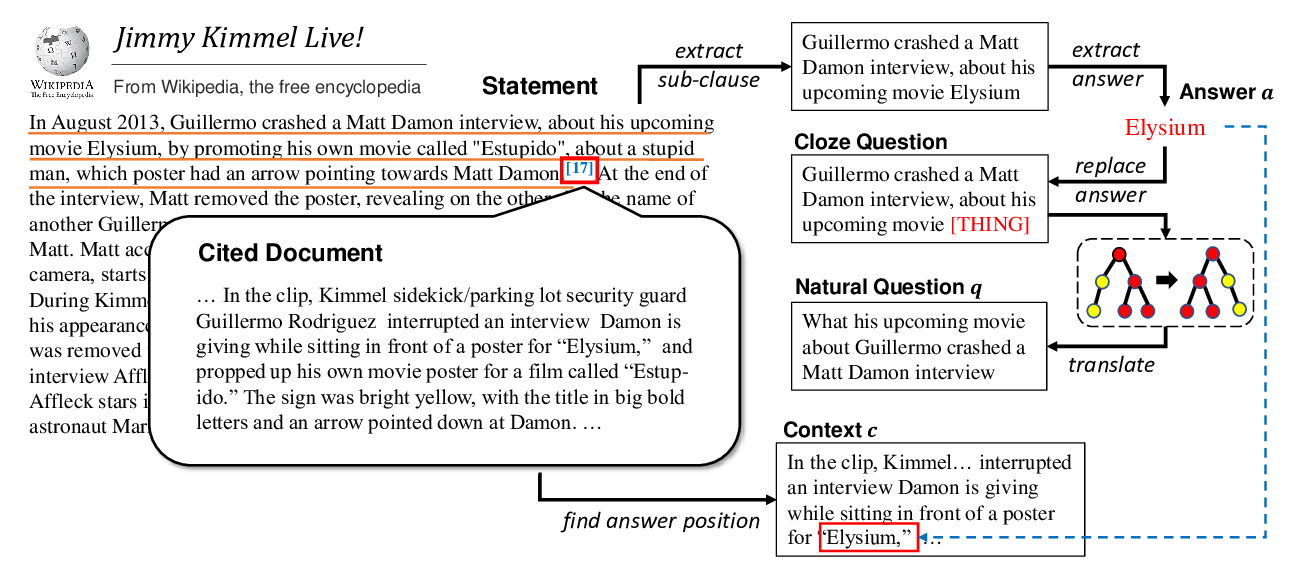

Harvesting and Refining Question-Answer Pairs for Unsupervised QA

Zhongli Li, Wenhui Wang, Li Dong, Furu Wei, Ke Xu,