Dependency Graph Enhanced Dual-transformer Structure for Aspect-based Sentiment Classification

Hao Tang, Donghong Ji, Chenliang Li, Qiji Zhou

Machine Learning for NLP, Long Paper

Session 11B: Jul 8 (06:00-07:00 GMT)

Session 12A: Jul 8 (08:00-09:00 GMT)

Abstract:

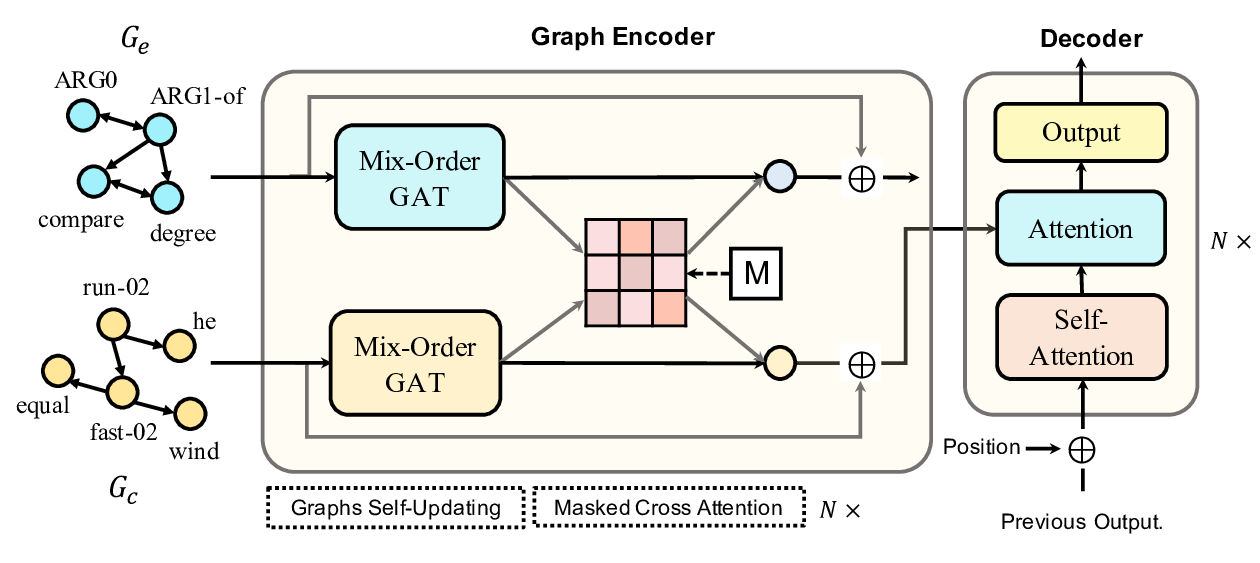

Aspect-based sentiment classification is a popular task that aims to identify the emotion associated with a specific aspect. A single sentence may express different sentiments toward different aspects. Many sophisticated methods, such as attention mechanisms and Convolutional Neural Networks (CNNs), have been widely employed to handle this challenge. Recently, semantic dependency trees implemented with Graph Convolutional Networks (GCNs) have been introduced to describe the inner connection between aspects and their associated emotion words. However, the improvement is limited by the noise and instability of dependency trees. To this end, we propose a dependency graph enhanced dual-transformer network (named DGEDT) that jointly considers the flat representations learnt from a Transformer and the graph-based representations learnt from the corresponding dependency graph in an iterative interaction manner. Specifically, a dual-transformer structure is devised in DGEDT to support mutual reinforcement between flat representation learning and graph-based representation learning. The idea is to allow the dependency graph to guide the representation learning of the transformer encoder and vice versa. Results on five datasets demonstrate that the proposed DGEDT outperforms all state-of-the-art alternatives by a large margin.
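To make the idea of iterative interaction between a flat view and a dependency-graph view more concrete, here is a minimal pure-Python sketch. It is not the DGEDT implementation: the abstract does not specify the interaction mechanism, so the parameter-free neighbour averaging in `graph_step`, the linear blending in `interact`, the `mix` and `rounds` parameters, and the toy sentence with its dependency edges are all assumptions chosen for illustration.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def normalized_adjacency(edges, n):
    """Row-normalized adjacency matrix with self-loops, built from undirected edges."""
    adj = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for head, dep in edges:
        adj[head][dep] = 1.0
        adj[dep][head] = 1.0
    return [[v / sum(row) for v in row] for row in adj]


def graph_step(adj, states):
    """One weight-free graph convolution: average each token with its neighbours."""
    return matmul(adj, states)


def interact(flat, graph, adj, rounds=2, mix=0.5):
    """Alternate updates so each view is blended with the other.

    The linear blend is an assumed stand-in for whatever interaction
    the actual model uses; it only illustrates the two-way information flow.
    """
    for _ in range(rounds):
        graph = graph_step(adj, graph)  # propagate along dependency edges
        new_flat = [[(1 - mix) * f + mix * g for f, g in zip(fr, gr)]
                    for fr, gr in zip(flat, graph)]
        new_graph = [[(1 - mix) * g + mix * f for f, g in zip(fr, gr)]
                     for fr, gr in zip(flat, graph)]
        flat, graph = new_flat, new_graph
    return flat, graph


# Hypothetical toy input: "The food was great", edges as (head, dependent) indices.
tokens = ["The", "food", "was", "great"]
edges = [(1, 0), (3, 1), (3, 2)]
adj = normalized_adjacency(edges, len(tokens))

# Made-up 2-dimensional token states, used as the starting point for both views.
states = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [1.0, 1.0]]
flat_out, graph_out = interact(states, states, adj)
```

In this sketch the graph view pulls information along dependency edges (for example from "great" to "food"), and the blend then passes that signal to the flat view and back, which is the two-way guidance the abstract describes at a high level.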

You can open the pre-recorded video in a separate window.

NOTE: The SlidesLive video may display the authors in a random order. The correct author list is shown at the top of this webpage.

Similar Papers

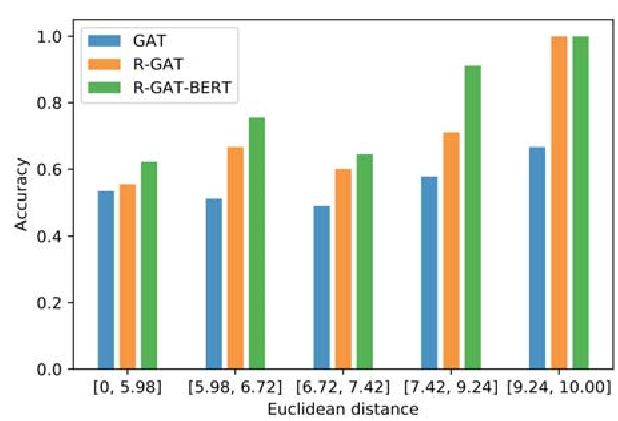

Relational Graph Attention Network for Aspect-based Sentiment Analysis

Kai Wang, Weizhou Shen, Yunyi Yang, Xiaojun Quan, Rui Wang

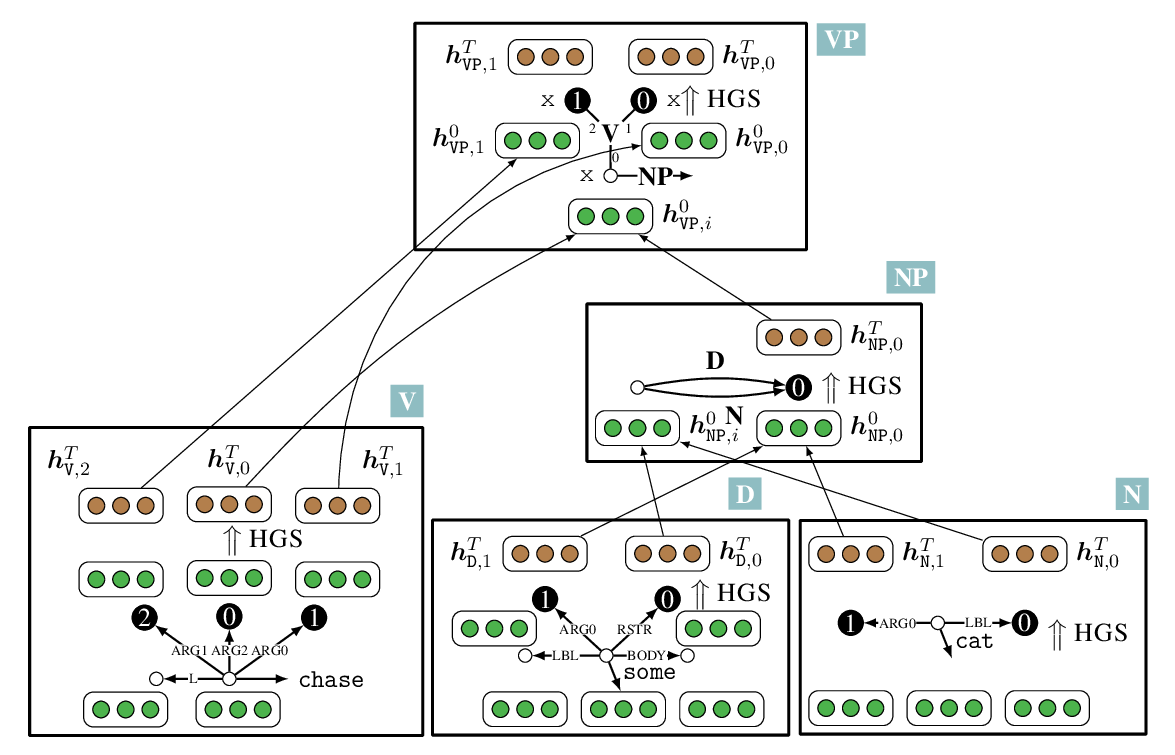

Line Graph Enhanced AMR-to-Text Generation with Mix-Order Graph Attention Networks

Yanbin Zhao, Lu Chen, Zhi Chen, Ruisheng Cao, Su Zhu, Kai Yu

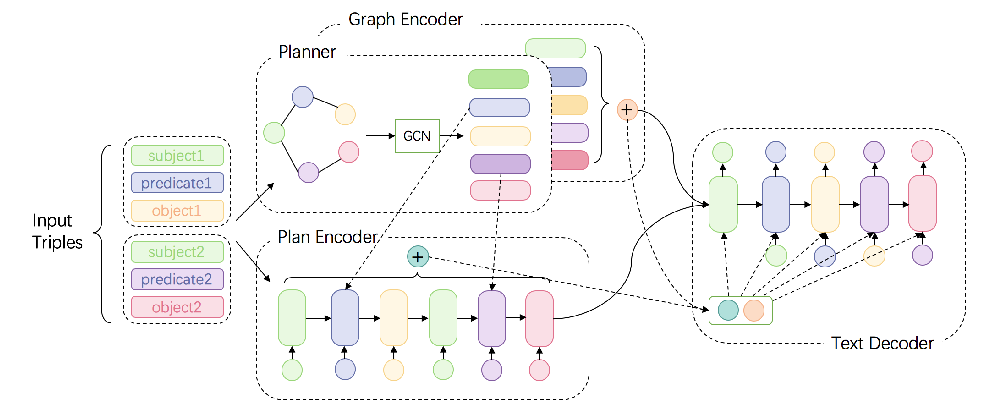

Bridging the Structural Gap Between Encoding and Decoding for Data-To-Text Generation

Chao Zhao, Marilyn Walker, Snigdha Chaturvedi