RPD: A Distance Function Between Word Embeddings

Xuhui Zhou, Shujian Huang, Zaixiang Zheng

Student Research Workshop (SRW) Paper

Session 1B: Jul 6 (06:00-07:00 GMT)

Session 9B: Jul 7 (18:00-19:00 GMT)

Abstract:

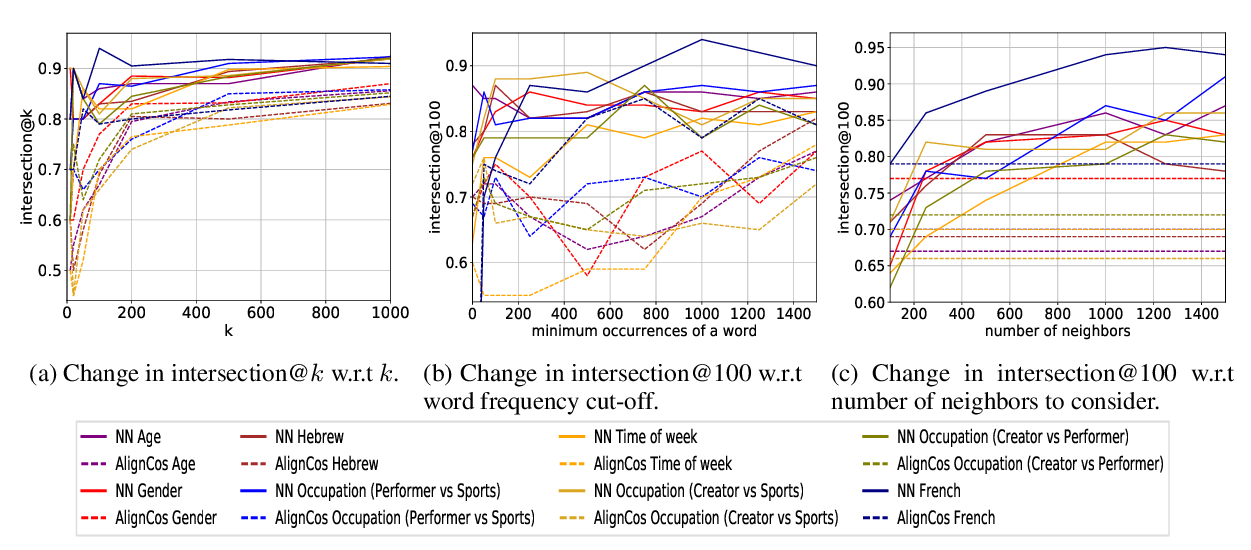

It is well understood that different algorithms, training processes, and corpora produce different word embeddings. However, less is known about the relation between different embedding spaces, i.e., how far different sets of embeddings deviate from each other. In this paper, we propose a novel metric called Relative Pairwise Inner Product Distance (RPD) to quantify the distance between different sets of word embeddings. This unitary-invariant metric has a unified scale for comparing different sets of word embeddings. Based on the properties of RPD, we systematically study the relations between word embeddings produced by different algorithms and investigate the influence of different training processes and corpora. The results shed light on poorly understood word embeddings and justify RPD as a measure of the distance between embedding spaces.
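To make the idea of a unitary-invariant distance based on pairwise inner products concrete, here is a minimal sketch in plain Python. This page does not give the RPD formula, so everything in the code is an assumption for illustration: the function names (`unit_rows`, `pip_matrix`, `frobenius`, `relative_pip_distance`) are hypothetical, and the row normalization and the 0.5 scaling factor are one plausible choice that may differ from the paper's exact definition. The sketch compares the matrices of pairwise inner products of two embedding sets over the same vocabulary, and divides by the product of their Frobenius norms so that the result lies on a common scale.

```python
import math


def unit_rows(emb):
    """Scale every word vector (row) to unit length. Assumes no zero vectors."""
    out = []
    for row in emb:
        norm = math.sqrt(sum(x * x for x in row))
        out.append([x / norm for x in row])
    return out


def pip_matrix(emb):
    """Pairwise inner products between all row-normalized word vectors."""
    rows = unit_rows(emb)
    return [[sum(a * b for a, b in zip(u, v)) for v in rows] for u in rows]


def frobenius(mat):
    """Frobenius norm of a matrix given as a list of rows."""
    return math.sqrt(sum(x * x for row in mat for x in row))


def relative_pip_distance(emb_a, emb_b):
    """Hypothetical relative pairwise-inner-product distance (illustrative only).

    Both inputs must list the same words in the same order; the embedding
    dimensions may differ because only word-by-word inner products are compared.
    """
    pip_a, pip_b = pip_matrix(emb_a), pip_matrix(emb_b)
    diff = [[x - y for x, y in zip(ra, rb)] for ra, rb in zip(pip_a, pip_b)]
    return 0.5 * frobenius(diff) ** 2 / (frobenius(pip_a) * frobenius(pip_b))


# Toy data (made up for illustration): three "words" in two dimensions.
emb = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

# Rotating every vector by the same angle is a unitary transform of the space.
theta = 0.7
cos_t, sin_t = math.cos(theta), math.sin(theta)
rotated = [[x * cos_t - y * sin_t, x * sin_t + y * cos_t] for x, y in emb]

# A genuinely different arrangement of the same three words.
other = [[1.0, 0.0], [1.0, 0.1], [0.0, 1.0]]

print(relative_pip_distance(emb, rotated))
print(relative_pip_distance(emb, other))
```

Because a rotation preserves all inner products, the first distance is zero up to floating-point error, while the second is clearly positive. For realistic vocabulary sizes, an array library would replace the nested lists.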

Similar Papers

Simple, Interpretable and Stable Method for Detecting Words with Usage Change across Corpora

Hila Gonen, Ganesh Jawahar, Djamé Seddah, Yoav Goldberg

Stolen Probability: A Structural Weakness of Neural Language Models

David Demeter, Gregory Kimmel, Doug Downey

Spying on Your Neighbors: Fine-grained Probing of Contextual Embeddings for Information about Surrounding Words

Josef Klafka, Allyson Ettinger