Multi-Domain Neural Machine Translation with Word-Level Adaptive Layer-wise Domain Mixing

Haoming Jiang, Chen Liang, Chong Wang, Tuo Zhao

Machine Translation Long Paper

Session 3A: Jul 6 (12:00-13:00 GMT)

Session 4B: Jul 6 (18:00-19:00 GMT)

Abstract:

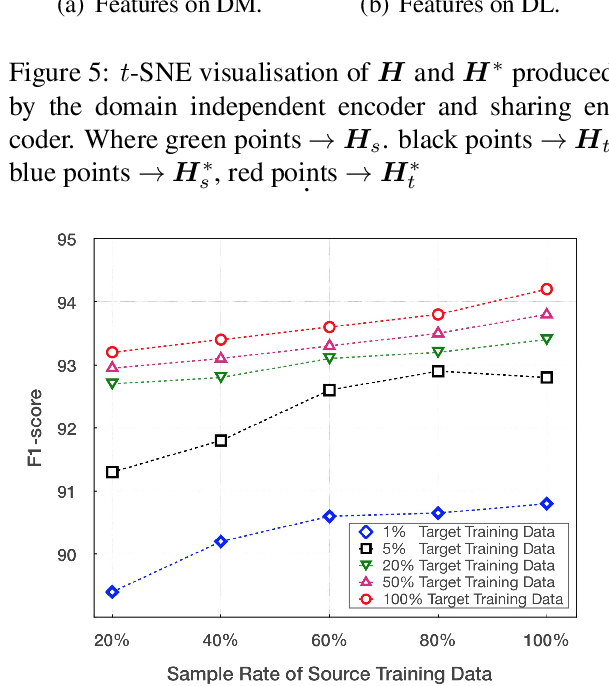

Many multi-domain neural machine translation (NMT) models achieve knowledge transfer by forcing a single encoder to learn embeddings shared across domains. However, this design lacks adaptation to individual domains. To overcome this limitation, we propose a novel multi-domain NMT model that uses individual modules for each domain, to which we apply word-level, adaptive, and layer-wise domain mixing. We first observe that words in a sentence are often related to multiple domains. Hence, we assume each word has a domain proportion, which indicates its domain preference. Word representations are then obtained by mixing their embeddings in the individual domains according to their domain proportions. We show that this can be achieved by carefully designing multi-head dot-product attention modules for the different domains and then taking weighted averages of their parameters, using word-level, layer-wise domain proportions. In this way, we achieve effective domain knowledge sharing while also capturing fine-grained domain-specific knowledge. Our experiments show that the proposed model outperforms existing ones on several NMT tasks.
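To make the mixing idea concrete, here is a minimal NumPy sketch of word-level domain mixing for a single linear projection. It is an illustration under stated assumptions, not the authors' implementation: the function name `domain_mixed_projection`, the scoring matrix `R` used to produce domain proportions, and the use of a softmax over domains are all hypothetical choices made for this example. The abstract only states that each word has a domain proportion and that per-domain parameters are combined by weighted averaging.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def domain_mixed_projection(X, W_domains, R):
    """Project each word with a word-specific mixture of per-domain weights.

    X:         (n_words, d_in)   word representations
    W_domains: (k, d_in, d_out)  one projection matrix per domain
    R:         (k, d_in)         hypothetical domain-scoring weights (assumption)

    Returns the mixed projections (n_words, d_out) and the
    per-word domain proportions (n_words, k).
    """
    # Assumed form of the domain proportions: a softmax over k domain scores.
    D = softmax(X @ R.T, axis=-1)

    # Project every word with every domain's matrix: (k, n_words, d_out).
    per_domain = np.einsum("nd,kde->kne", X, W_domains)

    # Weighted average over domains, separately for each word.
    mixed = np.einsum("nk,kne->ne", D, per_domain)
    return mixed, D
```

Because the projection is linear, averaging the per-domain outputs with weights `D[n]` gives the same result as projecting word `n` with the weighted average of the per-domain matrices, which is the "weighted averages of their parameters" reading of the abstract. In a layer-wise variant, each layer (and each attention projection) would presumably have its own `R` and its own set of `W_domains`.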


Similar Papers

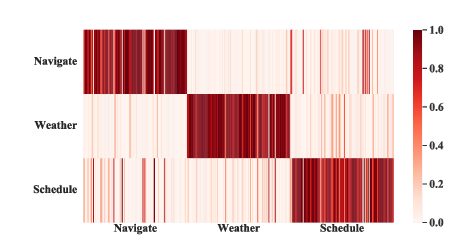

Dynamic Fusion Network for Multi-Domain End-to-end Task-Oriented Dialog

Libo Qin, Xiao Xu, Wanxiang Che, Yue Zhang, Ting Liu

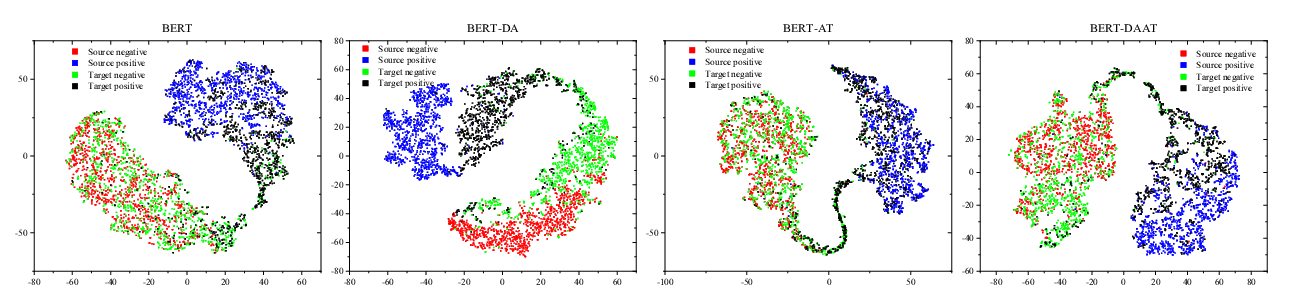

Adversarial and Domain-Aware BERT for Cross-Domain Sentiment Analysis

Chunning Du, Haifeng Sun, Jingyu Wang, Qi Qi, Jianxin Liao

Coupling Distant Annotation and Adversarial Training for Cross-Domain Chinese Word Segmentation

Ning Ding, Dingkun Long, Guangwei Xu, Muhua Zhu, Pengjun Xie, Xiaobin Wang, Haitao Zheng

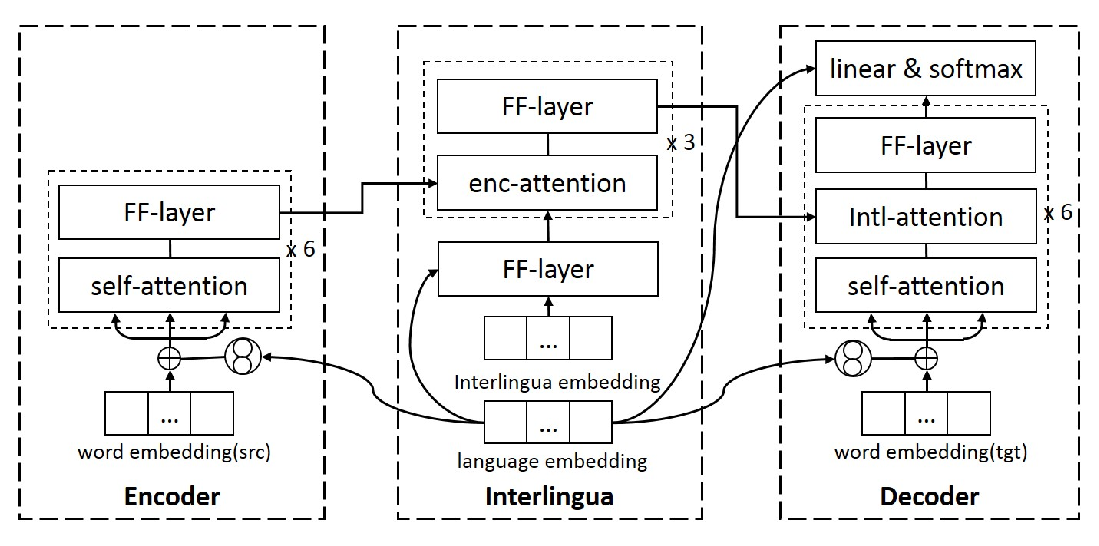

Language-aware Interlingua for Multilingual Neural Machine Translation

Changfeng Zhu, Heng Yu, Shanbo Cheng, Weihua Luo