Contextual Embeddings: When Are They Worth It?

Simran Arora, Avner May, Jian Zhang, Christopher Ré

Machine Learning for NLP Short Paper

Session 4B: Jul 6

(18:00-19:00 GMT)

Session 5B: Jul 6

(21:00-22:00 GMT)

Abstract:

We study the settings for which deep contextual embeddings (e.g., BERT) give large improvements in performance relative to classic pretrained embeddings (e.g., GloVe), and an even simpler baseline---random word embeddings---focusing on the impact of the training set size and the linguistic properties of the task. Surprisingly, we find that both of these simpler baselines can match contextual embeddings on industry-scale data, and often perform within 5 to 10% accuracy (absolute) on benchmark tasks. Furthermore, we identify properties of data for which contextual embeddings give particularly large gains: language containing complex structure, ambiguous word usage, and words unseen in training.

You can open the

pre-recorded video

in a separate window.

NOTE: The SlidesLive video may display a random order of the authors.

The correct author list is shown at the top of this webpage.

Similar Papers

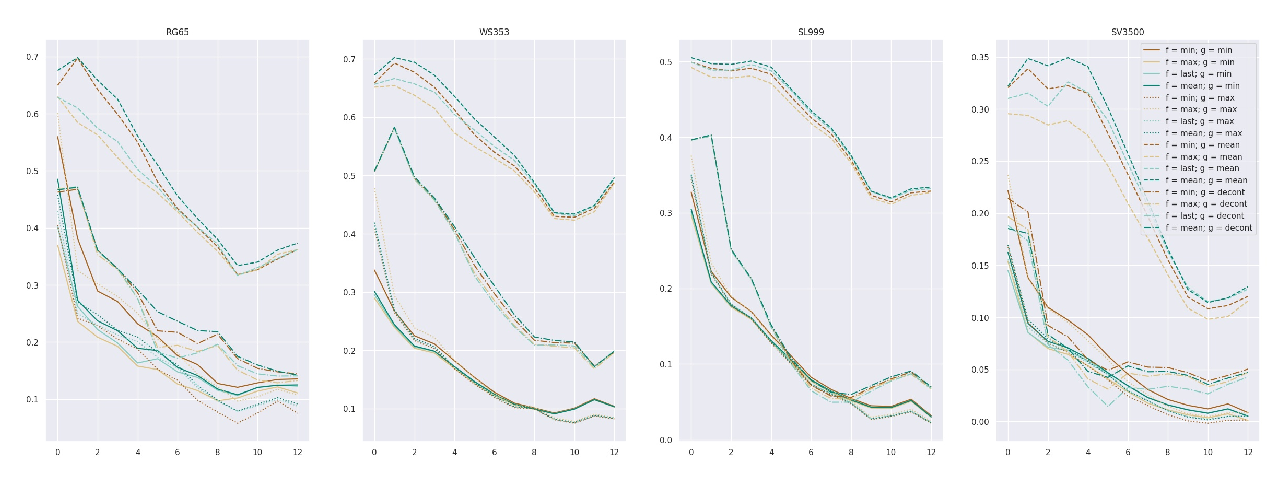

Interpreting Pretrained Contextualized Representations via Reductions to Static Embeddings

Rishi Bommasani, Kelly Davis, Claire Cardie,

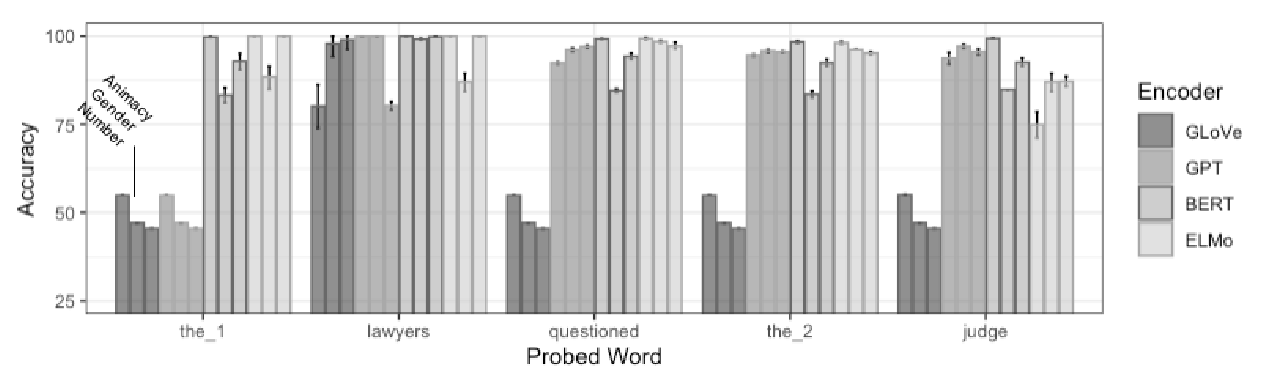

Spying on Your Neighbors: Fine-grained Probing of Contextual Embeddings for Information about Surrounding Words

Josef Klafka, Allyson Ettinger,

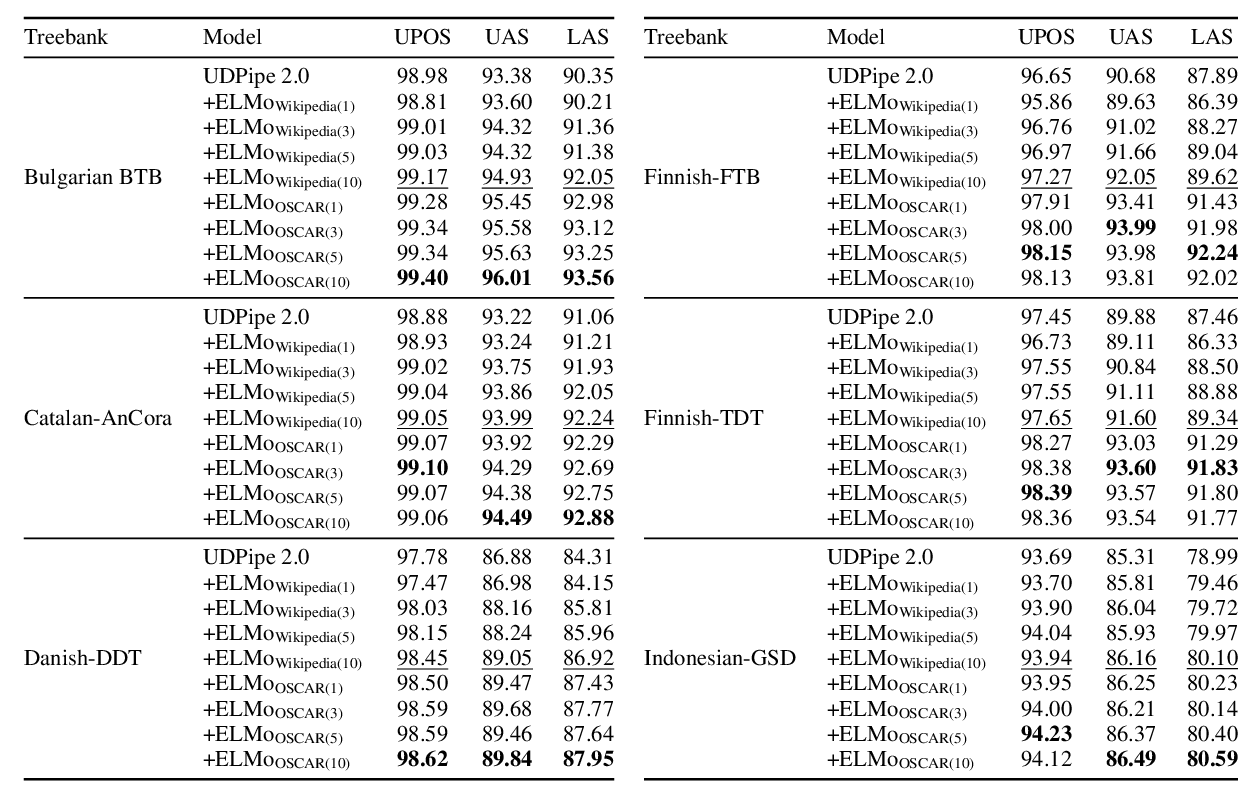

A Monolingual Approach to Contextualized Word Embeddings for Mid-Resource Languages

Pedro Javier Ortiz Suárez, Laurent Romary, Benoît Sagot,

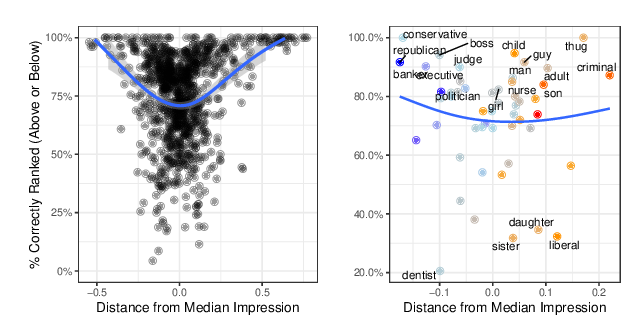

When do Word Embeddings Accurately Reflect Surveys on our Beliefs About People?

Kenneth Joseph, Jonathan Morgan,