Multidirectional Associative Optimization of Function-Specific Word Representations

Daniela Gerz, Ivan Vulić, Marek Rei, Roi Reichart, Anna Korhonen

Semantics: Lexical Long Paper

Session 4B: Jul 6

(18:00-19:00 GMT)

Session 5B: Jul 6

(21:00-22:00 GMT)

Abstract:

We present a neural framework for learning associations between interrelated groups of words such as the ones found in Subject-Verb-Object (SVO) structures. Our model induces a joint function-specific word vector space, where vectors of e.g. plausible SVO compositions lie close together. The model retains information about word group membership even in the joint space, and can thereby effectively be applied to a number of tasks reasoning over the SVO structure. We show the robustness and versatility of the proposed framework by reporting state-of-the-art results on the tasks of estimating selectional preference and event similarity. The results indicate that the combinations of representations learned with our task-independent model outperform task-specific architectures from prior work, while reducing the number of parameters by up to 95%.

You can open the

pre-recorded video

in a separate window.

NOTE: The SlidesLive video may display a random order of the authors.

The correct author list is shown at the top of this webpage.

Similar Papers

Stolen Probability: A Structural Weakness of Neural Language Models

David Demeter, Gregory Kimmel, Doug Downey,

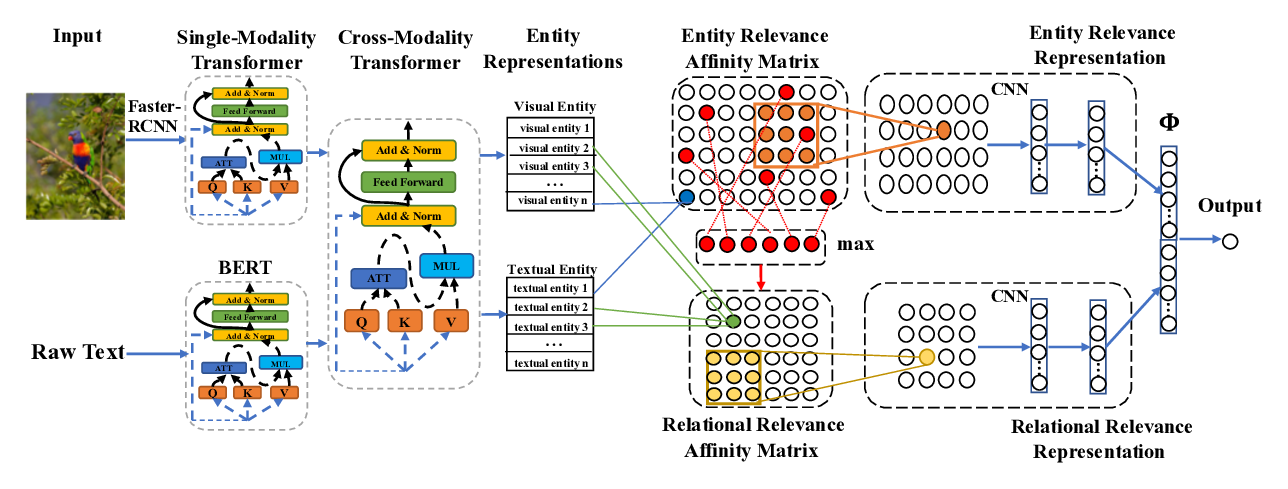

Cross-Modality Relevance for Reasoning on Language and Vision

Chen Zheng, Quan Guo, Parisa Kordjamshidi,

To Pretrain or Not to Pretrain: Examining the Benefits of Pretrainng on Resource Rich Tasks

Sinong Wang, Madian Khabsa, Hao Ma,