Gender Bias in Multilingual Embeddings and Cross-Lingual Transfer

Jieyu Zhao, Subhabrata Mukherjee, Saghar Hosseini, Kai-Wei Chang, Ahmed Hassan Awadallah

Ethics and NLP Long Paper

Session 6A: Jul 7

(05:00-06:00 GMT)

Session 10B: Jul 7

(21:00-22:00 GMT)

Abstract:

Multilingual representations embed words from many languages into a single semantic space such that words with similar meanings are close to each other regardless of the language. These embeddings have been widely used in various settings, such as cross-lingual transfer, where a natural language processing (NLP) model trained on one language is deployed to another language. While the cross-lingual transfer techniques are powerful, they carry gender bias from the source to target languages. In this paper, we study gender bias in multilingual embeddings and how it affects transfer learning for NLP applications. We create a multilingual dataset for bias analysis and propose several ways for quantifying bias in multilingual representations from both the intrinsic and extrinsic perspectives. Experimental results show that the magnitude of bias in the multilingual representations changes differently when we align the embeddings to different target spaces and that the alignment direction can also have an influence on the bias in transfer learning. We further provide recommendations for using the multilingual word representations for downstream tasks.

You can open the

pre-recorded video

in a separate window.

NOTE: The SlidesLive video may display a random order of the authors.

The correct author list is shown at the top of this webpage.

Similar Papers

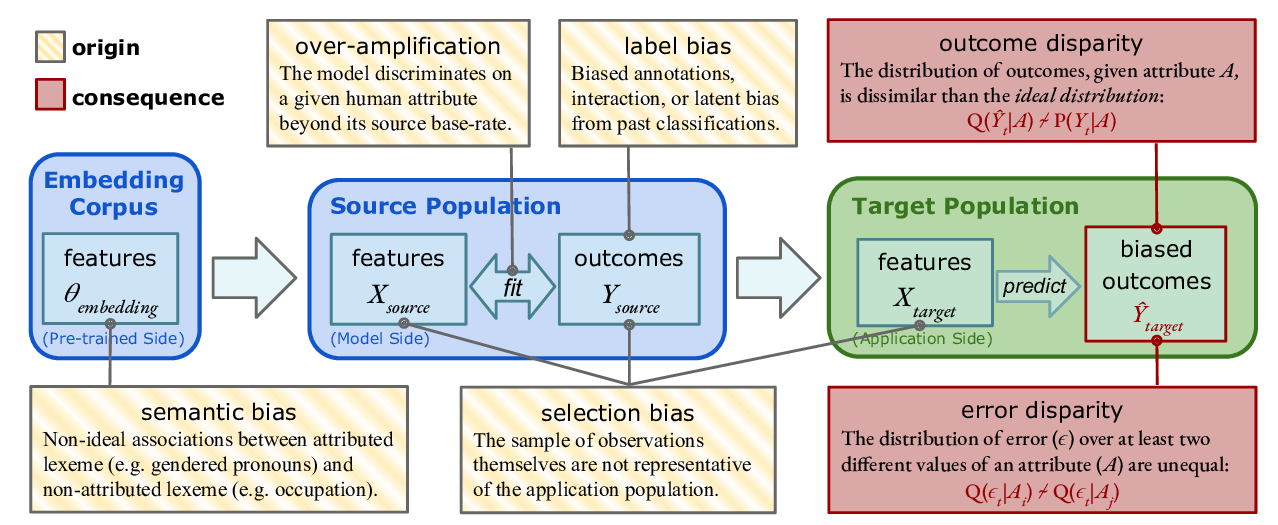

Predictive Biases in Natural Language Processing Models: A Conceptual Framework and Overview

Deven Santosh Shah, H. Andrew Schwartz, Dirk Hovy,

Mitigating Gender Bias Amplification in Distribution by Posterior Regularization

Shengyu Jia, Tao Meng, Jieyu Zhao, Kai-Wei Chang,

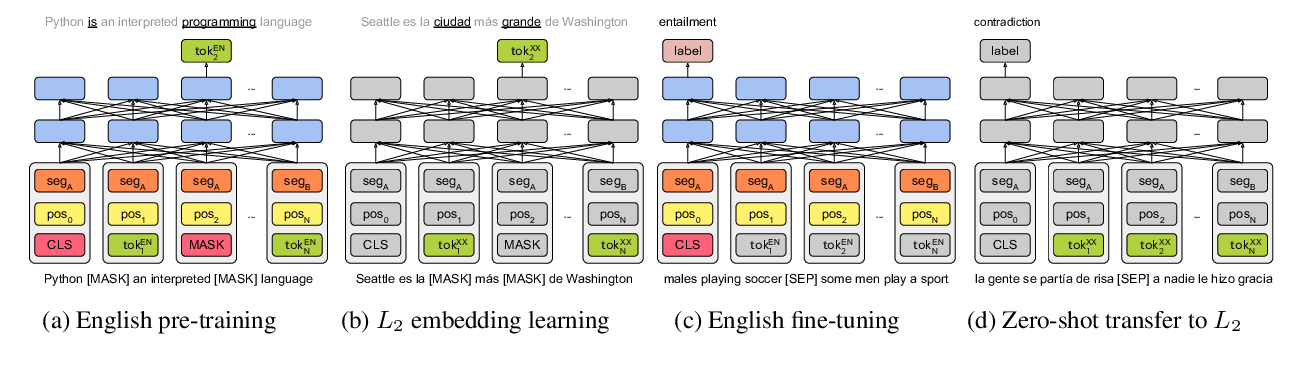

On the Cross-lingual Transferability of Monolingual Representations

Mikel Artetxe, Sebastian Ruder, Dani Yogatama,

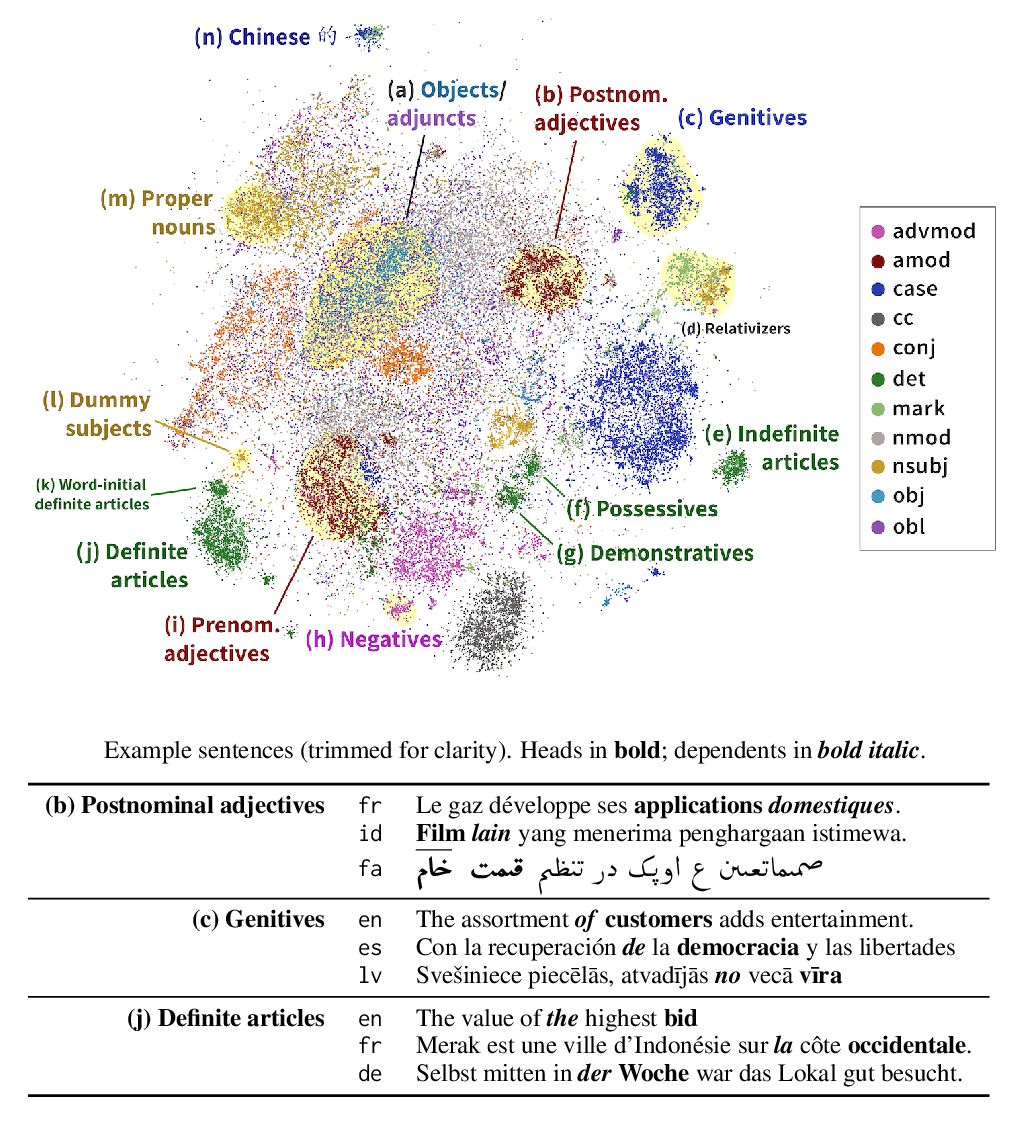

Finding Universal Grammatical Relations in Multilingual BERT

Ethan A. Chi, John Hewitt, Christopher D. Manning,