Information-Theoretic Probing for Linguistic Structure

Tiago Pimentel, Josef Valvoda, Rowan Hall Maudslay, Ran Zmigrod, Adina Williams, Ryan Cotterell

Interpretability and Analysis of Models for NLP Long Paper

Session 8B: Jul 7

(13:00-14:00 GMT)

Session 9A: Jul 7

(17:00-18:00 GMT)

Abstract:

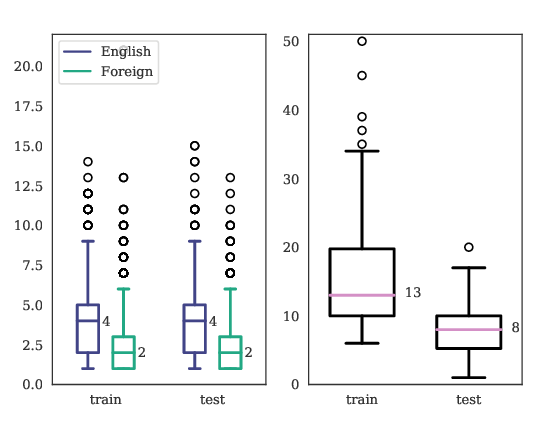

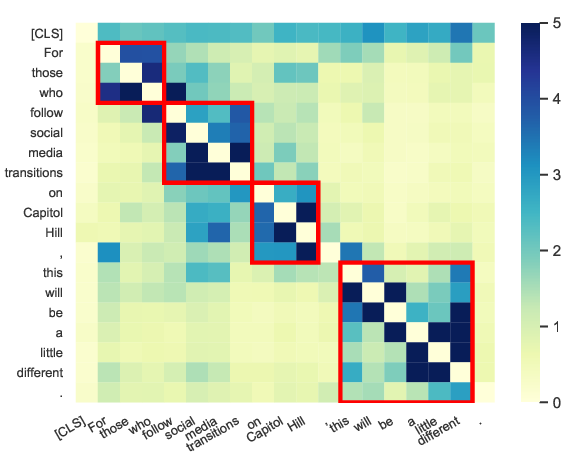

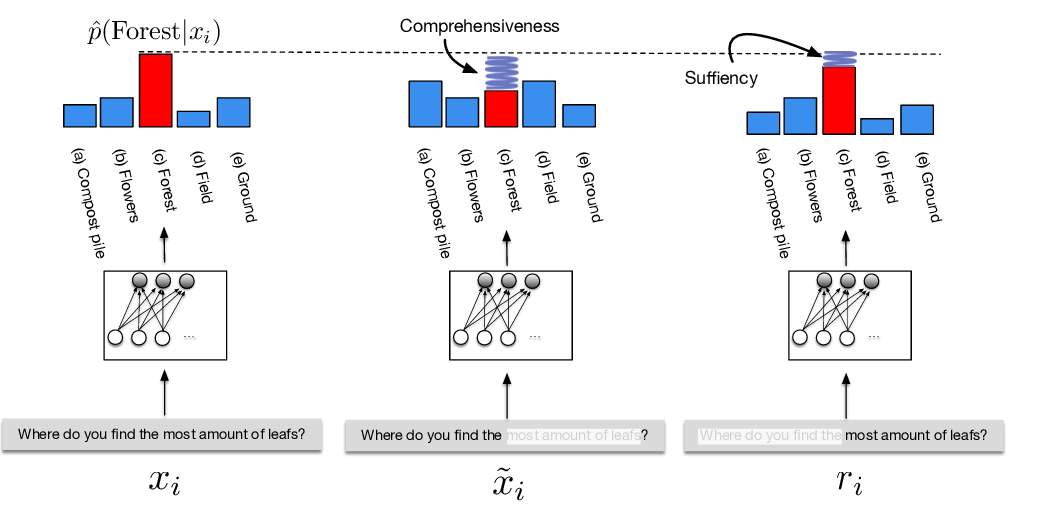

The success of neural networks on a diverse set of NLP tasks has led researchers to question how much these networks actually ``know'' about natural language. Probes are a natural way of assessing this. When probing, a researcher chooses a linguistic task and trains a supervised model to predict annotations in that linguistic task from the network's learned representations. If the probe does well, the researcher may conclude that the representations encode knowledge related to the task. A commonly held belief is that using simpler models as probes is better; the logic is that simpler models will identify linguistic structure, but not learn the task itself. We propose an information-theoretic operationalization of probing as estimating mutual information that contradicts this received wisdom: one should always select the highest performing probe one can, even if it is more complex, since it will result in a tighter estimate, and thus reveal more of the linguistic information inherent in the representation. The experimental portion of our paper focuses on empirically estimating the mutual information between a linguistic property and BERT, comparing these estimates to several baselines. We evaluate on a set of ten typologically diverse languages often underrepresented in NLP research---plus English---totalling eleven languages. Our implementation is available in https://github.com/rycolab/info-theoretic-probing.

You can open the

pre-recorded video

in a separate window.

NOTE: The SlidesLive video may display a random order of the authors.

The correct author list is shown at the top of this webpage.

Similar Papers

LINSPECTOR: Multilingual Probing Tasks for Word Representations

Gözde Gül Sahin, Clara Vania, Ilia Kuznetsov, Iryna Gurevych,

Perturbed Masking: Parameter-free Probing for Analyzing and Interpreting BERT

Zhiyong Wu, Yun Chen, Ben Kao, Qun Liu,

ERASER: A Benchmark to Evaluate Rationalized NLP Models

Jay DeYoung, Sarthak Jain, Nazneen Fatema Rajani, Eric Lehman, Caiming Xiong, Richard Socher, Byron C. Wallace,

PuzzLing Machines: A Challenge on Learning From Small Data

Gözde Gül Şahin, Yova Kementchedjhieva, Phillip Rust, Iryna Gurevych,