One Size Does Not Fit All: Generating and Evaluating Variable Number of Keyphrases

Xingdi Yuan, Tong Wang, Rui Meng, Khushboo Thaker, Peter Brusilovsky, Daqing He, Adam Trischler

Generation Long Paper

Session 14A: Jul 8 (17:00-18:00 GMT)

Session 15A: Jul 8 (20:00-21:00 GMT)

Abstract:

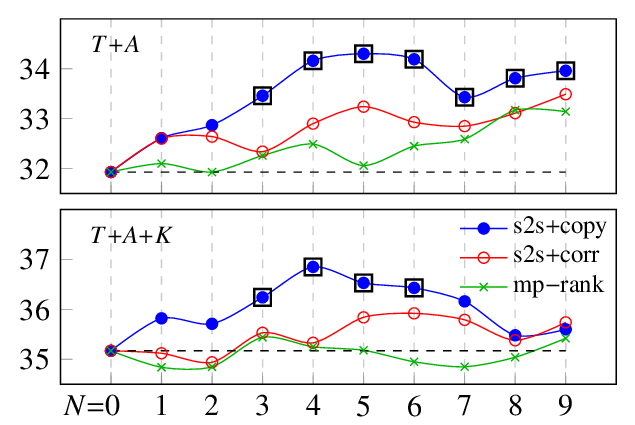

Different texts naturally correspond to different numbers of keyphrases. This desideratum is largely missing from existing neural keyphrase generation models. In this study, we address this problem from both modeling and evaluation perspectives. We first propose a recurrent generative model that generates multiple keyphrases as delimiter-separated sequences. Generation diversity is further enhanced with two novel techniques that manipulate decoder hidden states. In contrast to previous approaches, our model is capable of generating diverse keyphrases and controlling the number of outputs. We further propose two evaluation metrics tailored to variable-number generation. We also introduce a new dataset, StackEx, that expands beyond the only existing genre (i.e., academic writing) in keyphrase generation tasks. On both previous and new evaluation metrics, our model outperforms strong baselines on all datasets.
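To make the idea of delimiter-separated output concrete, here is a minimal Python sketch of how one decoded sequence might be split into a variable number of keyphrases and scored without a fixed cutoff. It is an illustration only, not the model or the metrics proposed in the paper: the `<sep>` token, the helper names `split_keyphrases` and `f1_all_predictions`, and the exact-match scoring rule are all assumptions made for this example.

```python
from typing import List

SEP = "<sep>"  # hypothetical delimiter token; the abstract does not name one


def split_keyphrases(sequence: str, sep: str = SEP) -> List[str]:
    """Split one decoded sequence into unique, normalized keyphrases."""
    seen = set()
    phrases = []
    for chunk in sequence.split(sep):
        # Lowercase and collapse whitespace so duplicates compare equal.
        phrase = " ".join(chunk.lower().split())
        if phrase and phrase not in seen:
            seen.add(phrase)
            phrases.append(phrase)
    return phrases


def f1_all_predictions(predicted: List[str], gold: List[str]) -> float:
    """Exact-match F1 over every predicted phrase, with no fixed cutoff k."""
    pred_set, gold_set = set(predicted), set(gold)
    if not pred_set or not gold_set:
        return 0.0
    correct = len(pred_set & gold_set)
    if correct == 0:
        return 0.0
    precision = correct / len(pred_set)
    recall = correct / len(gold_set)
    return 2 * precision * recall / (precision + recall)


# Assumed example data: the model decides how many phrases to emit.
decoded = "neural networks <sep> Keyphrase  generation <sep> neural networks <sep> "
gold = ["keyphrase generation", "sequence to sequence", "neural networks", "evaluation"]
predicted = split_keyphrases(decoded)
score = f1_all_predictions(predicted, gold)
```

Because the score is computed over however many phrases the sequence contains, a model that emits two correct phrases for a four-phrase target is rewarded for precision and penalized for recall, rather than being padded or truncated to a fixed number of outputs.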


Similar Papers

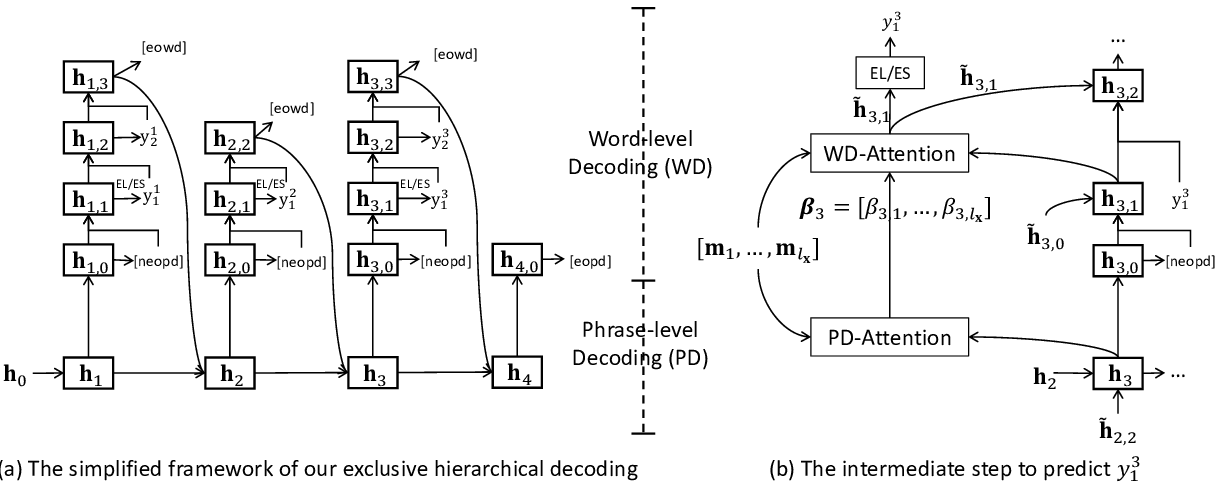

Exclusive Hierarchical Decoding for Deep Keyphrase Generation

Wang Chen, Hou Pong Chan, Piji Li, Irwin King

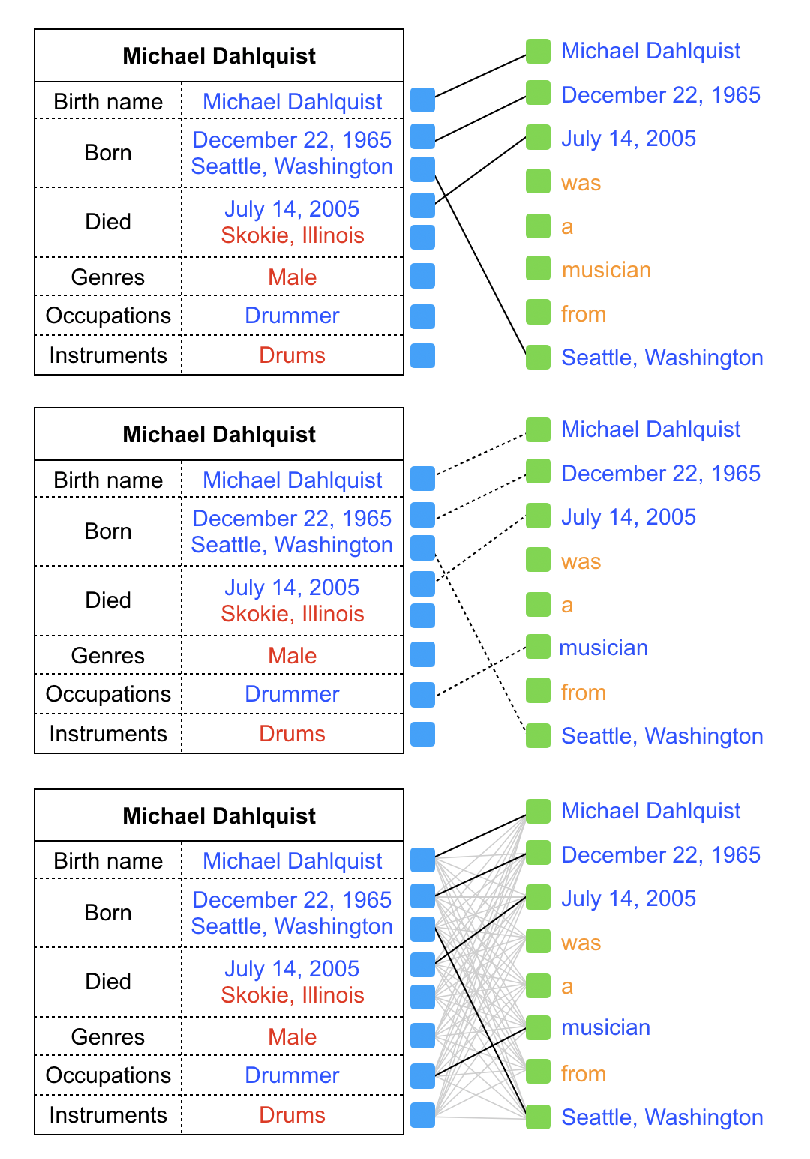

Towards Faithful Neural Table-to-Text Generation with Content-Matching Constraints

Zhenyi Wang, Xiaoyang Wang, Bang An, Dong Yu, Changyou Chen

An Empirical Comparison of Unsupervised Constituency Parsing Methods

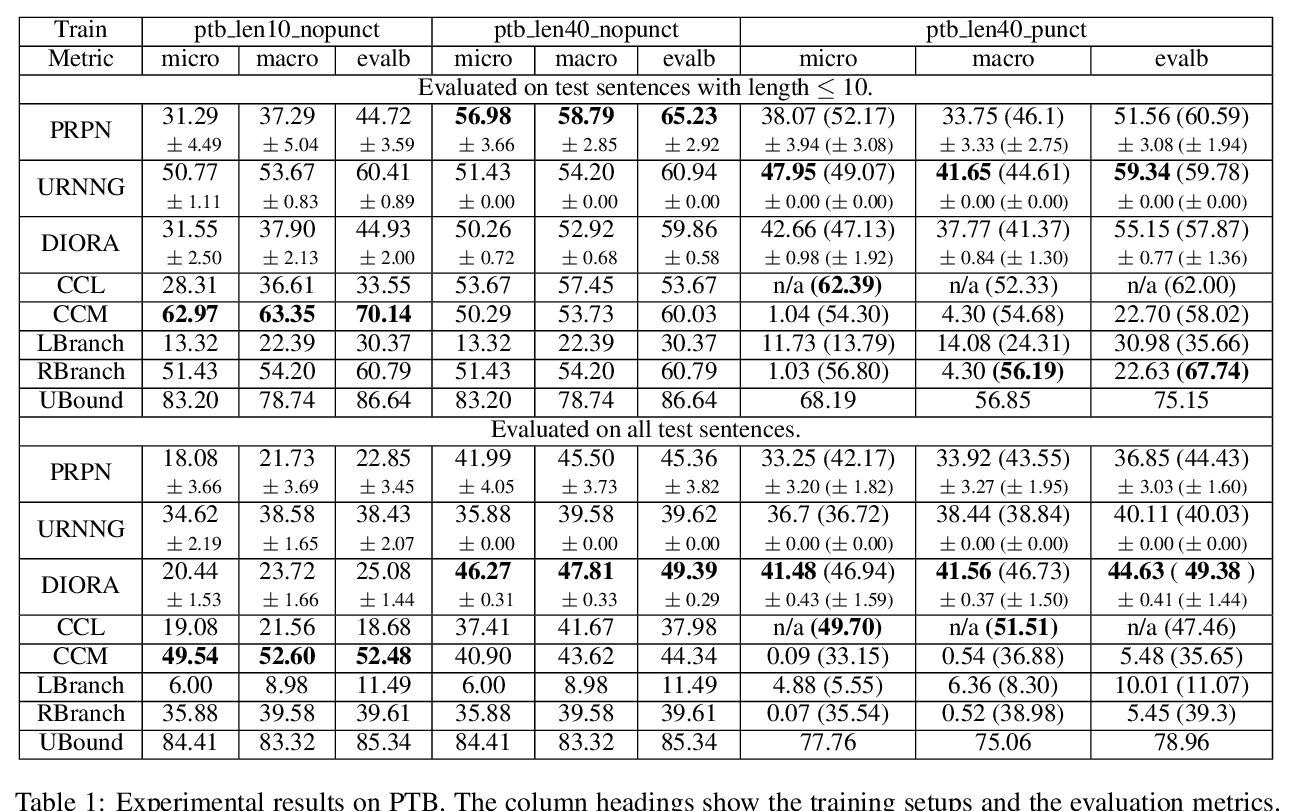

Jun Li, Yifan Cao, Jiong Cai, Yong Jiang, Kewei Tu,