Clinical Concept Linking with Contextualized Neural Representations

Elliot Schumacher, Andriy Mulyar, Mark Dredze

NLP Applications Short Paper

Session 14B: Jul 8 (18:00-19:00 GMT)

Session 15B: Jul 8 (21:00-22:00 GMT)

Abstract:

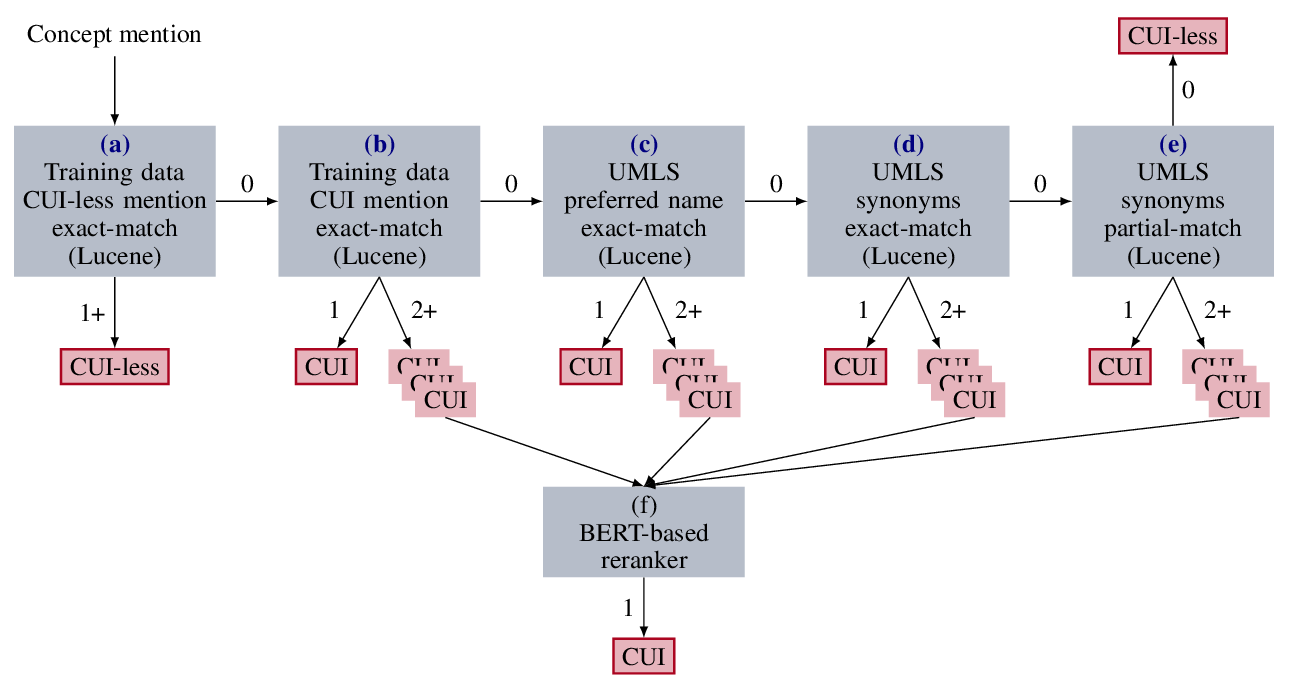

In traditional approaches to entity linking, linking decisions are based on three sources of information: the similarity of the mention string to an entity's name, the similarity of the document context to the entity, and broader information about the knowledge base (KB). In some domains, the KB contains little contextual information, so linking must rely more heavily on mention string similarity. We consider one such case, concept linking, which seeks to link mentions of medical concepts to a medical concept ontology. We propose an approach to concept linking that leverages recent work in contextualized neural models, such as ELMo (Peters et al. 2018), which create token representations that integrate the surrounding context of the mention and the concept name. We find that a neural ranking approach paired with contextualized embeddings provides gains over a competitive baseline (Leaman et al. 2013). Additionally, we find that a pre-training step using synonyms from the ontology offers a useful initialization for the ranker.
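
As a rough illustration of the ranking setup the abstract describes, the sketch below scores each candidate concept by the similarity between a mention and the concept's names, then sorts the candidates. It is a minimal sketch under loud assumptions, not the authors' system: `embed` is a character-trigram stand-in for a contextualized encoder such as ELMo, the concept IDs and names in `ontology` are invented for the example, and `hinge_loss` is a generic pairwise margin loss of the kind often used to train rankers (the abstract does not say which loss is used).

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for a contextualized encoder: a bag of character trigrams."""
    padded = f"  {text.lower()} "
    return Counter(padded[i:i + 3] for i in range(len(padded) - 2))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[gram] for gram, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_concepts(mention, ontology):
    """Sort concept IDs by the best similarity between the mention and any of the concept's names."""
    mention_vec = embed(mention)
    scores = {
        concept_id: max(cosine(mention_vec, embed(name)) for name in names)
        for concept_id, names in ontology.items()
    }
    return sorted(scores, key=scores.get, reverse=True)


def hinge_loss(pos_score, neg_score, margin=0.5):
    """Pairwise margin loss: zero once the correct concept outscores a wrong one by `margin`."""
    return max(0.0, margin - pos_score + neg_score)


# Hypothetical ontology: concept IDs and synonym lists are invented for illustration.
ontology = {
    "C001": ["myocardial infarction", "heart attack"],
    "C002": ["migraine", "migraine headache"],
    "C003": ["fracture of femur", "broken femur"],
}

ranked = rank_concepts("heart attacks", ontology)
```

In this toy setting, the synonym lists play the role the abstract assigns to ontology synonyms: pairs of names for the same concept could serve as positive examples when pre-training a learned ranker, before any annotated mentions are seen.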

Similar Papers

A Generate-and-Rank Framework with Semantic Type Regularization for Biomedical Concept Normalization

Dongfang Xu, Zeyu Zhang, Steven Bethard

Improving Entity Linking through Semantic Reinforced Entity Embeddings

Feng Hou, Ruili Wang, Jun He, Yi Zhou

Improving Candidate Generation for Low-resource Cross-lingual Entity Linking

Shuyan Zhou, Shruti Rijhwani, John Wieting, Jaime Carbonell, Graham Neubig

Learning Interpretable Relationships between Entities, Relations and Concepts via Bayesian Structure Learning on Open Domain Facts

Jingyuan Zhang, Mingming Sun, Yue Feng, Ping Li